Notes of Diffusion Models

Table of Contents

- Revisit of Denoising Diffusion Probabilistic Models (DDPM)

- Connection with DDIM

- Connection with Score-based DM

- Noise Conditional Score Networks (NCSN)

Revisit of Denoising Diffusion Probabilistic Models (DDPM)

Some good reviews:

- How diffusion models work: the math from scratch

- What are Diffusion Models?

- Generative Modeling by Estimating Gradients of the Data Distribution

- Understanding Diffusion Models: A Unified Perspective

- How to Train Your Energy-Based Models

DDPM Formulation

Given the data distribution $\x_0\sim q(\x_0)$ which is unknown, we want to learn an approximation $p_\theta (\x_0)$ that we can sample from. It is similar to variational autoencoder (VAE) or hierarchical VAE in the form, e.g., it also has encoding process (forward process) and decoding process (reverse process) and minimizes ELBO, but with multiple high dimensional latent variables.

Diffusion models are latent variable models with the formulation (Markov chain)

\[p_\theta(\x_0) = \int p_\theta(\x_{0:T})d\x_{1:T} \space \text{where} \space p_\theta(\x_{0:T}) = p(\x_T)\prod_{t=1}^{T}p_\theta(\x_{t-1}\mid\x_t).\]The latent variables are ${\x_1,…,\x_T}$ with the same dimensionality as the data $\x_0$ and their joint $p_\theta(\x_{0:T})$ is called the reverse process (decoding) starting at $p(\x_T)=\N(0,\I)$. The approximate posterior $q(\x_{1:T}\mid\x_0)$, called the forward process, is fixed to another Markov chain

\[q(\x_{1:T}\mid\x_0) = \prod_{t=1}^{T}q(\x_t\mid\x_{t-1}).\]Compared to other latent variable models, e.g., VAE, it has no learnable parameters in the approximate posterior $q$ (encoding). In this process, the data $\x_0$ is transformed to $\x_T$ by gradually adding Gaussian noise. To recover the data distribution, the ELBO is maximized as

\[\begin{align*} \max_{\theta} \log p_\theta(\x_0) &= \log \int \frac{p_\theta(\x_{0:T})q(\x_{1:T}\mid\x_0)}{q(\x_{1:T}\mid\x_0)}d\x_{1:T}\\ &= \log \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{\frac{p_\theta(\x_{0:T})}{q(\x_{1:T}\mid\x_0)}} \\ &\geq \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{\log \frac{p_\theta(\x_{0:T})}{q(\x_{1:T}\mid\x_0)}}\\ &= -KL\sbr{q(\x_{1:T}\mid\x_0)\mid p_\theta(\x_{0:T})}. \end{align*}\]DDPM Forward Process (Encoding)

The original data $\x_0\sim q(\x_0)$ and the Markov chain assumes we add noise to the data $\x_0$ in each time step $t\in[1,T]$ with transition kernel $q(\x_t\mid\x_{t-1})$ which is usually handcrafted as

\[q(\x_t\mid\x_{t-1}) = \N\nbr{\x_t;\sqrt{1-\beta_t}\x_{t-1},\beta_t\I}\]where $\beta_t\in (0,1)$ is a hyperparameter. A closed form of dependence according to the reparameterization trick:

\[\begin{align*} \x_t &= \sqrt{1-\beta_t}\x_{t-1} + \sqrt{\beta_t}\bm{\epsilon}_{t-1} \quad \text{where} \quad \bm{\epsilon}_{t-1}\sim \N\nbr{\bm{0},\bm{I}}\\ &= \sqrt{\alpha_t}\x_{t-1} + \sqrt{1-\alpha_t}\bm{\epsilon}_{t-1} \quad \text{where} \quad \alpha_t = 1-\beta_t \end{align*}\]Furthermore, with the addition of two Gaussian random variable, we can trace back to the data $\x_0$

\[\begin{align} \x_t &= \sqrt{\alpha_t\alpha_{t-1}}\x_{t-2} + \sqrt{\alpha_t-\alpha_t\alpha_{t-1}}\bm{\epsilon}_{t-2} + \sqrt{1-\alpha_t}\bm{\epsilon}_{t-1} \nonumber\\ &= \sqrt{\alpha_t\alpha_{t-1}}\x_{t-2} + \sqrt{1-\alpha_{t}\alpha_{t-1}} \bm{\epsilon} \quad \text{where} \quad \bm{\epsilon}\sim \N\nbr{\bm{0},\bm{I}}\nonumber\\ & \qquad ...\nonumber\\ &= \sqrt{\bar{\alpha}_t}\x_{0} + \sqrt{1-\bar{\alpha}_{t}} \bm{\epsilon} \end{align}\\\]where $\bar{\alpha}_ t = \prod_{i=1}^t \alpha_i $. Usually, $\alpha_i$ will decrease along with $t$, and therefore $\bar{\alpha}_t \rightarrow 0$ when $t \rightarrow \infty$.

DDPM Reverse Process (Decoding)

To generate a new sample or reverse from $\x_T\sim\N(0,\I)$, we need to know $q(\x_{t-1} \mid \x_t)$ which is unavailable in practice for decoding. However, we know it is also Gaussian according to the Bayes’ theorem. To make it tractable, we use $q(\x_{t-1} \mid \x_t, \x_0)$ which is conditioned on $\x_0$, which can be written as

\[q(\x_{t-1} \vert \x_t, \x_0) = q(\x_t\mid\x_{t-1},\x_0)\frac{q(\x_{t-1}\mid\x_0)}{q(\x_t\mid\x_0)} = \mathcal{N}(\x_{t-1}; \color{blue}{\tilde{\bm{\mu}}}(\x_t, \x_0), \color{red}{\tilde{\beta}_t} \mathbf{I}),\]where

\(\begin{align} \tilde{\beta}_t &= \frac{1 - \bar{\alpha}_{t-1}}{1 - \bar{\alpha}_t} \cdot \beta_t \nonumber\\ \tilde{\bm{\mu}}_t (\x_t, \x_0) &= \frac{\sqrt{\alpha_t}(1 - \bar{\alpha}_{t-1})}{1 - \bar{\alpha}_t} \x_t + \frac{\sqrt{\bar{\alpha}_{t-1}}\beta_t}{1 - \bar{\alpha}_t} \x_0 \nonumber\\ &= \frac{1}{\sqrt{\alpha_t}} \Big( \x_t - \frac{1 - \alpha_t}{\sqrt{1 - \bar{\alpha}_t}} \bm{\epsilon}_t \Big) = \tilde{\bm{\mu}}_t \end{align}\) with $\x_0 = \frac{1}{\sqrt{\bar{\alpha}_t}}(\x_t - \sqrt{1 - \bar{\alpha}_t}\bm{\epsilon}_t)$ (derived from Eq. (1)). The details of derivations can be found here (complete the square).

Note that $q(\x_{t-1} \mid \x_t, \x_0) = q(\x_{t-1} \mid \x_t)$ due to Markovian property. The decoder $p_\theta$ with parameters $\theta$ is used to approximate $q(\x_{t-1} \mid \x_t, \x_0)$ with the same form as $q(\x_{t-1} \mid \x_t, \x_0)$ (Gaussian), i.e.,

\[p_\theta(\x_{t-1} \mid \x_{t}) = \N\nbr{\x_{t-1}\mid \bm{\mu}_\theta(\x_t, t), \bm{\Sigma}_\theta(\x_t, t)}.\]Training: ELBO

Our objective is to maximize the ELBO $-KL\sbr{q(\x_{1:T}\mid\x_0)\mid p_\theta(\x_{0:T})}$ which is equivalent to minimize the negative ELBO

\[\begin{align*} L &= \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{\log \frac{q(\x_{1:T}\mid\x_0)}{p_\theta(\x_{0:T})}}\\ &= \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{\log \frac{\prod_{t=1}^{T} q(\x_{t}\mid\x_{t-1})}{p_\theta(\x_T)\prod_{t=1}^{T} p_\theta(\x_{t-1}\mid\x_{t})}}\\ &= \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{-\log p_\theta(\x_T) + \sum_{t=2}^T\log \frac{q(\x_t\mid\x_{t-1})}{p_\theta(\x_{t-1}\mid\x_t)} + \log\frac{q(\x_1\mid\x_0)}{p_\theta(\x_0\mid\x_1)}}\\ &= \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{-\log p_\theta(\x_T) + \sum_{t=2}^T\log \nbr{\frac{q(\x_{t-1}\mid\x_{t},\x_0)}{p_\theta(\x_{t-1}\mid\x_t)}\frac{q(\x_t\mid\x_0)}{q(\x_{t-1}\mid\x_0)}} + \log\frac{q(\x_1\mid\x_0)}{p_\theta(\x_0\mid\x_1)}}\\ &= \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{-\log p_\theta(\x_T) + \sum_{t=2}^T\log \frac{q(\x_{t-1}\mid\x_{t},\x_0)}{p_\theta(\x_{t-1}\mid\x_t)} + \log \frac{q(\x_T\mid\x_0)}{q(\x_{1}\mid\x_0)} + \log\frac{q(\x_1\mid\x_0)}{p_\theta(\x_0\mid\x_1)}}\\ &= \mathbb{E}_{\x_{1:T}\sim q(\x_{1:T}\mid\x_0)}\sbr{\log \frac{q(\x_T\mid\x_0)}{p(\x_T)} + \sum_{t=2}^T\log \frac{q(\x_{t-1}\mid\x_{t},\x_0)}{p_\theta(\x_{t-1}\mid\x_t)} - \log p_\theta(\x_0\mid\x_1)}\\ &= \underbrace{KL\sbr{q(\x_T\mid\x_0)\mid p(\x_T)}}_{L_T} + \sum_{t=2}^T \underbrace{\mathbb{E}_{\x_t\sim q(\x_t\mid\x_0)}\sbr{KL\sbr{q(\x_{t-1}\mid\x_{t},\x_0)\mid p_\theta(\x_{t-1}\mid\x_t)}}}_{L_{t}} - \underbrace{\mathbb{E}_{\x_{1}\sim q(\x_{1}\mid\x_0)}\sbr{\log p_\theta(\x_0\mid\x_1)}}_{L_0} \end{align*}\]Parameterization on $L_t$

For $L_t$, we assume the decoder $p_\theta$ has the same form as $q(\x_{t-1}\mid\x_t, \x_0)$ (see Eq. (2)), and the mean $\bm{\mu}_\theta$ is parameterized as

\[\bm{\mu}_\theta = \frac{1}{\sqrt{\alpha_t}} \nbr{\x_t - \frac{1 - \alpha_t}{\sqrt{1 - \bar{\alpha}_t}} \bm{\epsilon}_\theta(\x_t, t)}\] \[p_\theta(\x_{t-1}\mid\x_t) = \N\nbr{\x_{t-1}; \frac{1}{\sqrt{\alpha_t}} \Big( \x_t - \frac{1 - \alpha_t}{\sqrt{1 - \bar{\alpha}_t}} \bm{\epsilon}_\theta(\x_t, t) \Big), \bm{\Sigma}_\theta(\x_t, t)}\]In the above case, the $\bm{\epsilon}_\theta(\x_t, t)$ is the output of the backbone model (usually U-net) which is used to approximate the added noise $\bm{\epsilon}_t$ in the forward process.

The KL divergence between two Gaussian:

\[_{KL}(p||q) = \frac{1}{2}\left[\log\frac{|\Sigma_q|}{|\Sigma_p|} - d + (\bm{\mu_p}-\bm{\mu_q})^T\Sigma_q^{-1}(\bm{\mu_p}-\bm{\mu_q}) + tr\left\{\Sigma_q^{-1}\Sigma_p\right\}\right]\]We set $\bm{\Sigma}_\theta(\x_t, t) = \sigma_t^2\bm{I}$ where $\sigma_t^2 = \tilde{\beta}_t$ or $\sigma_t^2 = \beta_t$ \(\begin{align} L_t &= \mathbb{E}_{\x_0, \bm{\epsilon}} \sbr{\frac{1}{2\sigma_t^2} \| \tilde{\bm{\mu}}_t(\x_t, \x_0) - \bm{\mu}_\theta(\x_t, t) \|^2 } \nonumber\\ &= \mathbb{E}_{\x_0, \bm{\epsilon}} \sbr{\frac{ (1 - \alpha_t)^2 }{2 \alpha_t (1 - \bar{\alpha}_t) \sigma_t^2} \|\bm{\epsilon}_t - \bm{\epsilon}_\theta(\x_t, t)\|^2} \nonumber\\ &= \mathbb{E}_{\x_0, \bm{\epsilon}} \sbr{\frac{ (1 - \alpha_t)^2 }{2 \alpha_t (1 - \bar{\alpha}_t) \sigma_t^2} \|\bm{\epsilon}_t - \bm{\epsilon}_\theta(\sqrt{\bar{\alpha}_t}\x_{0} + \sqrt{1-\bar{\alpha}_{t}} \bm{\epsilon}_t, t)\|^2} \\ \end{align}\)

Eq. (3) is further reduced to

\(\begin{equation} L_{\text{simple}}(\theta) := \mathbb{E}_{\x_0, \bm{\epsilon}} \sbr{\|\bm{\epsilon}_t - \bm{\epsilon}_\theta(\sqrt{\bar{\alpha}_t}\x_{0} + \sqrt{1-\bar{\alpha}_{t}} \bm{\epsilon}_t, t)\|^2}, \end{equation}\) where the weight term is removed for better sample quality.

From another perspective, $\bm{\mu}_\theta$ can be parameterized as

\[\bm{\mu}_\theta = \frac{\sqrt{\alpha_t}(1 - \bar{\alpha}_{t-1})}{1 - \bar{\alpha}_t} \x_t + \frac{\sqrt{\bar{\alpha}_{t-1}}\beta_t}{1 - \bar{\alpha}_t} \tilde{\x}_\theta(\x_t,t),\]where $\tilde{\x}_\theta(\x_t,t)$ is the output of the backbone model (U-net) which is used to predict the data $\x_0$ directly.

$L_T$ and $L_0$

$L_T$ is considered a constant and ignored in the training (if $\beta_t$ is fixed). $L_0$ can be regraded as reconstruction error (VAE settings), i.e., $t=1$ in Eq. (4). More details can be found in the DDPM paper Sec. 3.3.

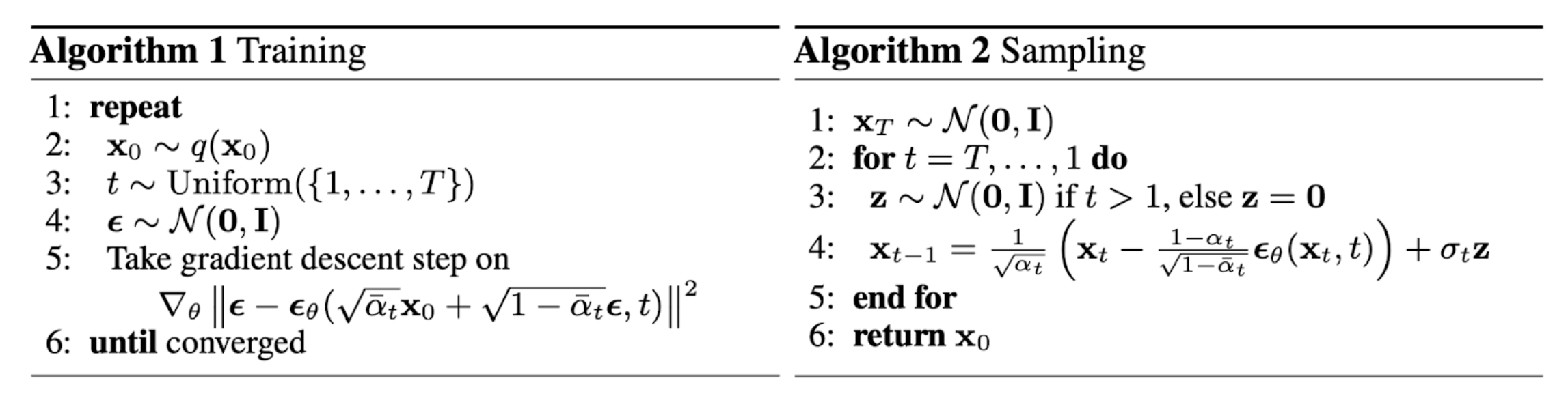

Implementation

Connection with DDIM

DDIM is proposed to accelerate the inference of DDPM. The formulation is slightly different but the training is proved to be the same.

The forward process is non-Markovian. Consider a family $Q$, indexed by a real vector $\sigma\in\R^T$, the joint is now conditioned on $\x_0$: $q_\sigma(\x_{1:T}\mid\x_0) = q_\sigma(\x_T\mid\x_0)\prod_{i=2}^Tq_\sigma(\x_{t-1}\mid\x_{t},\x_0)$ where

\[\begin{equation} q_\sigma(\x_{t-1}\mid\x_{t},\x_0) = \N\nbr{\sqrt{\bar{\alpha}_{t-1}}\x_0 + \sqrt{1-\bar{\alpha}_{t-1}-\sigma_t^2}\frac{\x_t-\sqrt{\bar{\alpha}_{t}}\x_0}{\sqrt{1-\bar{\alpha}_{t}}},\sigma_t^2\bm{I}} \end{equation}\]The Eq. (4) is selected to satisfy the joint and $q_\sigma(\x_t\mid\x_0) = \N\nbr{\sqrt{\bar{\alpha}_t}, (1-\bar{\alpha}_t)\bm{I}}$.

The forward process is obtained by $q_\sigma(\x_t\mid\x_{t-1},\x_0) = \frac{q_\sigma(\x_{t-1}\mid\x_{t},\x_0)q_\sigma(\x_t\mid\x_0)}{q_\sigma(\x_{t-1}\mid\x_0)}$. It is also Gaussian and the magnitude controls the randomness in the forward process. If we set $\sigma_t = 0$, the forward process becomes deterministic.

The reverse process is similar to the DDPM. The joint $p_\theta(\x_{0:T})$ is used to approximate the $q_\sigma(\x_{t-1}\mid\x_{t},\x_0)$ by minimization of KL-divergence and the training objective is the same as $L_{\text{simple}}$ of DDPM (Theorem 1 in DDIM paper). We can let the model (U-net) predict either $\x_0$ directly or the noise $\bm{\epsilon}_t$. For example (noise prediction),

\[f_\theta(\x_t) = \frac{\x_t-\sqrt{1-\bar{\alpha}_t}\bm{\epsilon}_{\theta}(\x_t,t)}{\sqrt{\bar{\alpha}_t}} = \tilde{\x}_0\]According to Eq. (5),

\[\begin{align} \x_{t-1} &= \sqrt{\bar{\alpha}_{t-1}}\tilde{\x}_0 + \sqrt{1-\bar{\alpha}_{t-1}-\sigma_t^2}\frac{\x_t-\sqrt{\bar{\alpha}_{t}}\tilde{\x}_0}{\sqrt{1-\bar{\alpha}_{t}}} + \sigma_t\bm{\epsilon}\\ &= \sqrt{\bar{\alpha}_{t-1}}\nbr{\frac{\x_t-\sqrt{1-\bar{\alpha}_t}\bm{\epsilon}_\theta(\x_t,t)}{\sqrt{\bar{\alpha}_{t}}}} + \sqrt{1-\bar{\alpha}_{t}-\sigma_t^2}\bm{\epsilon}_\theta(\x_t,t) + \sigma_t\bm{\epsilon} \end{align}\]$\sigma_t$ is set to $0$ in DDIM and the forward process becomes deterministic and the reverse process becomes implicit probabilistic model.

Accelerated Inference

In DDPM, we need to go through every forward steps to sample in backward process due to Markovian property. DDIM proposes to use a subset of total time steps to sample in reverse process, i.e., ${\x_{\tau_1},…,\x_{\tau_S}}\subset{\x_1,…,\x_T}$. From another perspective, the interval between two time steps is increased in the reverse process, e.g., $\tau_{s+1}-\tau_s = \Delta\tau \geq 1$ where $\Delta\tau$ is a hyperparameter. Now, we are sampling from $q_\sigma(\x_{t-\Delta_\tau}\mid\x_{t},\x_0)$ iteratively. Hence, the reverse process is accelerated. Why it can jump? It is essentially an ODE. Larger jump means more discretization error.

Connection with Score-based DM

Score and Score Matching

In order to learn a distribution $p_{data}$, we need to represent it first. Usually, we define

\[p_\theta(\x) = \frac{e^{-f_\theta(\x)}}{Z_\theta}\]where it satisfies $p_\theta(\x) \geq 0$ and $\int p_\theta(\x)d\x = 1$. For a dataset ${\x_1,…,\x_N}$, we cannot use MLE to estimate the parameters $\theta$ because the likelihood is intractable due to the normalizing factor $Z_\theta$. Instead, we can use the score function to estimate the parameters. Score of a probability density function $p(\x)$ is defined as $\nabla_{\x}\log p(x)$. For example, if $p$ is Gaussian distributed, $\nabla_{\x}\log p(\x) = -\frac{\x-\bm{\mu}}{\sigma^2} = -\frac{\bm{\epsilon}}{\sigma}$ (standardization). More details can be found in How to Train Your Energy-Based Models.

We want to learn a score model $\bm{s}_\theta(\x)=\nabla_{\x}\log p_\theta = -\nabla_{\x}f_{\theta}(\x)\approx \nabla_{\x}\log p_{data}$. Then, we can draw samples from the approximated score function. Can we draw samples directly from score function? Yes, Langevin Dynamics. To train $\bm{s}_\theta$, we minimize the Fisher Divergence, which is also called score matching,

\[\begin{equation} D_F\sbr{p_{data}\mid p_\theta} = \mathbb{E}_{p_{data}}\sbr{\frac{1}{2}\left\lVert\nabla_{\x}\log p_{data}(\x) - \bm{s}_\theta(\x)\right\rVert_2^2}. \end{equation}\]However, $p_{data}$ is unknown. Fortunately, it can be replaced by the derivative of $\bm{s}_\theta(\x)$ to eliminate the unknown $p_{data}$ (see the derivation from Estimation of Non-Normalized Statistical Models by Score Matching),

\[\begin{equation} D_F\sbr{p_{data}\mid p_\theta} = \mathbb{E}_{p_{data}}\sbr{Tr(\nabla_{\x} \bm{s}_\theta(\x)) + \frac{1}{2}\left\lVert \bm{s}_\theta(\x)\right\rVert_2^2} + \text{Constant}. \end{equation}\]One critical problem is that the score matching is not scalable to deep networks and high dimensional data due to the derivative term.

DDPM to Score Matching (Tweedie’s Formula)

From Eq. (1), we have the forward process $\x_t\sim\N(\x_t \mid \sqrt{\bar{\alpha}_t}\x_0, (1-\bar{\alpha}_t)\I)$. By Tweedie’s formula, the mean can be approximated as

\[\begin{align*} \sqrt{\bar{\alpha}_t}\x_0 &= \x_t + (1-\bar{\alpha}_t)\nabla_{\x_t}\log p(\x_t)\\ \x_0 &= \frac{\x_t}{\sqrt{\bar{\alpha}_t}} + \frac{1-\bar{\alpha}_t}{\sqrt{\bar{\alpha}_t}}\nabla_{\x_t}\log p(\x_t).\\ \end{align*}\]In this case, the $\tilde{\bm{\mu}}_t (\x_t, \x_0)$ can be parameterized as

\[\tilde{\bm{\mu}}_t (\x_t, \x_0) = \frac{1}{\sqrt{\alpha}_t} \x_t + \frac{1-\alpha_t}{\sqrt{\alpha}_t}\nabla_{\x_t}\log p(\x_t).\]Then, the backbone model is used to predict the score at time step $t$. The approximation becomes

\[\bm{\mu}_\theta = \frac{\x_t}{\sqrt{\bar{\alpha}_t}} + \frac{1-\bar{\alpha}_t}{\sqrt{\bar{\alpha}_t}}\bm{s}_\theta(\x_t, t).\]Finally, the objective becomes

\[\begin{align*} L_t &= \mathbb{E}_{\x_0, \bm{\epsilon}} \sbr{\frac{1}{2\sigma_t^2} \| \tilde{\bm{\mu}}_t(\x_t, \x_0) - \bm{\mu}_\theta(\x_t, t) \|^2 }\\ &= \mathbb{E}_{\x_0, \bm{\epsilon}} \sbr{\frac{1}{2\sigma_t^2}\frac{ (1 - \alpha_t)^2 }{\alpha_t} \|\bm{s}_\theta(\x_t, t) - \nabla_{\x_t}\log p(\x_t)\|^2}. \end{align*}\]Note that the true noise and the score looks very similar in the above equation. If we compare two type of $\x_0$ from score and noise, we have $\nabla_{\x_t}\log p(\x_t)=-\frac{1}{\sqrt{1-\bar{\alpha}}}\bm{\epsilon}$.

Guidance from Score

In guided diffusion models, we approximate the conditional data distribution $p(\x\mid y)$ instead of $p(\x)$. Hence, our goal is to learn the gradient $\nabla_{\x_t}\log p(\x_t\mid y)$ which can be written as

\[\begin{equation} \nabla_{\x_t}\log p(\x_t\mid y) = \underbrace{\nabla_{\x_t}\log p(y\mid\x_t)}_{\text{adversarial gradient}} + \underbrace{\nabla_{\x_t}\log p(\x)}_{\text{uncond. gradient}}. \end{equation}\]Classifier Guidance

In the classifier guidance, we have a classifier $\bm{C}_\phi$ which is used to predict the label $y$ given noised data $\x_t$. The gradient of the classifier is used as guidance, i.e.,

\[\begin{align*} \nabla_{\x_t}\log p(\x_t\mid y) &\approx \nabla_{\x_t}\log C_\phi(y\mid\x_t) + \bm{s}_\theta(\x_t, t)\\ &= \nabla_{\x_t}\log C_\phi(y\mid\x_t) - \frac{1}{\sqrt{1-\bar{\alpha}}}\bm{\epsilon}_\theta(\x_t, t)\\ &= - \frac{1}{\sqrt{1-\bar{\alpha}}} \underbrace{\nbr{\bm{\epsilon}_\theta(\x_t, t) - \sqrt{1-\bar{\alpha}}\nabla_{\x_t}\log C_\phi(y\mid\x_t)}}_{\text{new predictor}}. \end{align*}\]Note that $\nabla_{\x_t}\log p(\x_t)=-\frac{1}{\sqrt{1-\bar{\alpha}}}\bm{\epsilon}$. Then, the new predictor can be combined with a parameter $w$ to control the guidance strength, i.e.,

\[\begin{equation} \tilde{\bm{\epsilon}}(\x_t, t) = \bm{\epsilon}_\theta(\x_t, t) - w\sqrt{1-\bar{\alpha}}\nabla_{\x_t}\log C_\phi(y\mid\x_t), \end{equation}\]where we have the form

\[\nabla_{\x_t}\log p(\x_t) + \gamma \nabla_{\x_t}\log p(y\mid\x_t).\]One of the drawbacks of the classifier guidance is that the classifier has to be trained separately. In most of work, $\bm{\epsilon}_\theta(\x_t, t)$ is replaced by the conditioned version $\bm{\epsilon}_\theta(\x_t, t, y)$ in Eq. (11).

Classifier-free Guidance

To avoid the classifier, we need to reconsider the term $\nabla_{\x_t}\log p(y\mid\x_t)$. With Bayes’ theorem, we have

\[\begin{align*} \nabla_{\x_t}\log p(y\mid\x_t) &= \nabla_{\x_t}\log p(\x_t\mid y) - \nabla_{\x_t}\log p(\x_t)\\ &\approx \bm{s}_\theta(\x_t, t, y) - \bm{s}_\theta(\x_t, t)\\ &= -\frac{1}{\sqrt{1-\bar{\alpha}}}\nbr{\bm{\epsilon}_\theta(\x_t, t, y)-\bm{\epsilon}_\theta(\x_t, t)} \end{align*}\]The idea is that we use a new model $\bm{\epsilon}_\theta(\x_t, t, y)$ to learn the conditioned score. To obtain the approximated $\nabla_{\x_t}\log p(\x_t\mid y)$, we just add the unconditioned score, i.e.,

\[\begin{align*} \nabla_{\x_t}\log p(\x_t\mid y) &\approx \gamma\nabla_{\x_t}\log p(y\mid\x_t) + \nabla_{\x_t}\log p(\x_t)\\ &\approx -\frac{\gamma}{\sqrt{1-\bar{\alpha}}}\nbr{\bm{\epsilon}_\theta(\x_t, t, y)-\bm{\epsilon}_\theta(\x_t, t)} - \frac{1}{\sqrt{1-\bar{\alpha}}}\bm{\epsilon}_\theta(\x_t, t)\\ &=- \frac{1}{\sqrt{1-\bar{\alpha}}}\underbrace{\nbr{\gamma\bm{\epsilon}_\theta(\x_t, t, y) - (\gamma-1)\bm{\epsilon}_\theta(\x_t, t)}}_{\text{new predictor}}. \end{align*}\]If we set $\gamma=w+1$, we have the same form as the one in the classifier-free paper. Hence, the new predictor can be written as

\[\begin{equation} \tilde{\bm{\epsilon}}(\x_t, t, y) = (w+1)\bm{\epsilon}_\theta(\x_t, t, y) - w\bm{\epsilon}_\theta(\x_t, t). \end{equation}\]The advantage of the classifier-free guidance is that we do not need to train the classifier separately. The conditioned model $\bm{\epsilon}_\theta(\x_t, t, y)$ and unconditioned model $\bm{\epsilon}_\theta(\x_t, t, \emptyset)$ are learned jointly by “turn off” a certain ratio of the labels (looks like dropout normalization). For example, if we set the ratio (threshold) to $p$, we generate a mask mask = torch.rand(cemb.shape[0])<threshold where cemb is a batch of label embeddings. Then, we “turn off” these labels, i.e., cemb[np.where(mask)[0]] = 0. Finally, we add time embeddings and label embeddings, i.e., emb = cemb + temb to as the input to the backbone model.

Noise Conditional Score Networks (NCSN)

In Generative Modeling by Estimating Gradients of the Data Distribution, the authors propose a score-based model called Noise Conditional Score Networks (NCSN). To circumvent the direct computation of $Tr(\nabla_{\x}^2\log p_{\bm{\theta}}(\x))$, Denoising Score Matching or Sliced Score Matching are proposed. Here, we focus on the former. The idea is that we create a data distribution that very close to the true data distribution by perturbing the data $\x$ with a pre-specified noise distribution $q_{\sigma}(\tilde{\x}\mid\x)$ and the perturbed distribution is $q(\tilde{\x}) := \int p_{data}(x)q_{\sigma}(\tilde{\x}\mid\x)d\x$. To learn the score of data distribution, we minimize the Fisher divergence

\[\begin{align} D_F\sbr{q(\tilde{\x})\mid p_\theta(\tilde{\x})} &= \mathbb{E}_{q_{\sigma}(\tilde{\x})}\mathbb{E}_{p_{data}(\x)}\sbr{\frac{1}{2}\left\lVert\bm{s}_\theta(\tilde{\x}) - \nabla_{\tilde{\x}} q_{\sigma}(\tilde{\x})\right\rVert^2} \\ &= \mathbb{E}_{q_{\sigma}(\tilde{\x}\mid\x)}\mathbb{E}_{p_{data}(\x)}\sbr{\frac{1}{2}\left\lVert\bm{s}_\theta(\tilde{\x}) - \nabla_{\tilde{\x}} q_{\sigma}(\tilde{\x}\mid\x)\right\rVert^2 } + C \end{align}\]Note that $\bm{s}_{\theta^*}(\tilde{\x})=\nabla_{\x}q_\sigma(\tilde{\x})$ almost surely by minimizing Eq. (14). However, $\bm{s}_{\theta^*}(\tilde{\x}) = \nabla_{\tilde{\x}} q_{\sigma}(\tilde{\x}) \approx \nabla_{\x}\log p_{data}(\x)$ is true only when the noise level $\sigma$ is small enough, which leads to $q_{\sigma}(\tilde{\x}) \approx p_{data}(\x)$.

There are two problems in score matching:

- Inaccurate score estimation in low data density regions: the data often concentrate on low dimensional manifolds embedded in a high dimensional space (a.k.a., the ambient space). It means that the data don’t cover the whole $\mathbb{R}^D$ space. Hence, the score estimation is inaccurate in the low data density regions. Since the sampling is a iterative process, the inaccurate score estimation will lead to a biased sampling process.

- Slow mixing of Langevin dynamics: sampling process may be very slow if the distribution has 2 modes and they are separated by a low density region. The Langevin dynamics may be trapped in the low density region and cannot move to the other mode.

In NCSN, data are perturbed by different levels of noise. Let $\sigma_1>\sigma_2>…>\sigma_L$ be the noise levels which is a positive geometric sequence. The perturbed data distribution is written as $q_{\sigma_i}(\tilde{\x})=\int p_{data}(\x)p(\tilde{\x} \mid \x)d\x = \int p_{data}(\x)\N(\tilde{\x}\mid \x, \sigma_i) d\x $. We choose the noise function $q_{\sigma_i}(\tilde{\x} \mid \x) = \N(\x,\sigma_i^2\I)$ and $\nabla_{\tilde{\x}}\log q_{\sigma_i}(\tilde{\x}\mid \x) = -\frac{\tilde{\x}-\x}{\sigma_i^2}$. Intuitively, the perturbed data with different noise levels fill the low density regions. It may be regarded as a data augmentation technique. Also, these perturbed data build a “tunnel” between the true data distribution and the prior distribution ($\N(0,I)$), which helps improve the mixing rate of Langevin dynamics on multimodal distributions.

For a given noise level $\sigma_i$, the objective is

\[\ell_i(\theta;\sigma_i) = \mathbb{E}_{q_{\sigma_i}}\mathbb{E}_{p_{data}}\sbr{\frac{1}{2}\left\lVert\bm{s}_\theta(\tilde{\x},\sigma_i) + \frac{\tilde{\x}-\x}{\sigma_i^2}\right\rVert^2_2}.\]Then, the total objective is

\[\mathcal{L}(\theta) = \sum_{i=1}^L\lambda(\sigma_i)\ell_i(\theta;\sigma_i).\]The author found that $\left\lVert\bm{s}_\theta(\tilde{\x},\sigma_i)\right\rVert_2\propto \frac{1}{\sigma_i}$. To balance the contribution of each noise level, the weight $\lambda(\sigma_i)$ is set to $\sigma_i^2$ to make the objective scale-invariant $\frac{\tilde{\x}-\x}{\sigma_i}\sim \N(0,\I)$.

Langevin Dynamics (SDE)

It can be represented as Ito’s diffusion

\[\begin{aligned} d\x_t &= -\nabla_{\x}V(\x)dt + \sqrt{2}d\bm{w}_t \\ &= -\nabla_{\x}V(\x)dt + \sqrt{2dt}\bm{z}_t, \quad \bm{z}_t\sim\N(0,\I). \end{aligned}\]where $\bm{w}_t\sim\N(0,\bm{t})$ is the standard Wiener process and $V(\x)$ is the potential energy. The steady state distribution of the above SDE can be determined by the Fokker-Plank equation which is a partial differential equation (PDE) that describes the evolution of a probability distribution over time under the effect of drift forces and random (or noise) forces. To be more specific, we want to find the stationary distribution $p(\x)$ that satisfies the following equation

\[\frac{\partial p(\x,t)}{\partial t} = \frac{\partial }{\partial \x}\Big(\frac{\partial V(\x)}{\partial \x}p(\x,t)\Big) + \frac{\partial^2 p(\x,t)}{\partial \x^2} = 0\]which has the equilibrium distribution $p(\x) \propto e^{-V(\x)}$. We hope that the distribution $p(\x)$ is close to the true data distribution $p_{data}(\x)$ in our case. Recall that $p_\theta(\x) = \frac{e^{-f_\theta(\x)}}{Z_\theta}$, we can set $V(\x) = f_\theta(\x)$ and then $\nabla_{\x}V(\x) = \bm{s}_\theta(\x)$.

Sampling

The discretization of the above SDE can be written as

\[\x_{t+\epsilon} = \x_{t} - \epsilon\bm{s}_{\theta}(\x) + \sqrt{2\epsilon}\bm{z}_t.\]The sampling can be done by iteratively updating the data $\x$ with the above equation. In NCSN, the sampling process is slightly modified by using different step length at each noise level (annealed Langevin dynamics). The step length is set to $\alpha_i = \epsilon \frac{\sigma_i}{\sigma_L}$. The sampling process is

\[\x_{t} \leftarrow \x_{t-1} + \frac{\alpha_i}{2}\bm{s}_\theta(\x,\sigma_i) + \sqrt{\alpha_i}\bm{z}_i \quad t=0,1,...,T, \quad i=1,...,L.\]Note that there are 2 loops in the sampling process. The outer loop is for the noise level and the inner loop is for the Langevin dynamics.